AI Content Batch Creation Guide: The Essential Workflow for Content Creators

TL;DR: Key Takeaways

- Among over 207 million content creators worldwide, 91% are already using generative AI to boost content production efficiency, with power users seeing a 3-5x increase in productivity.

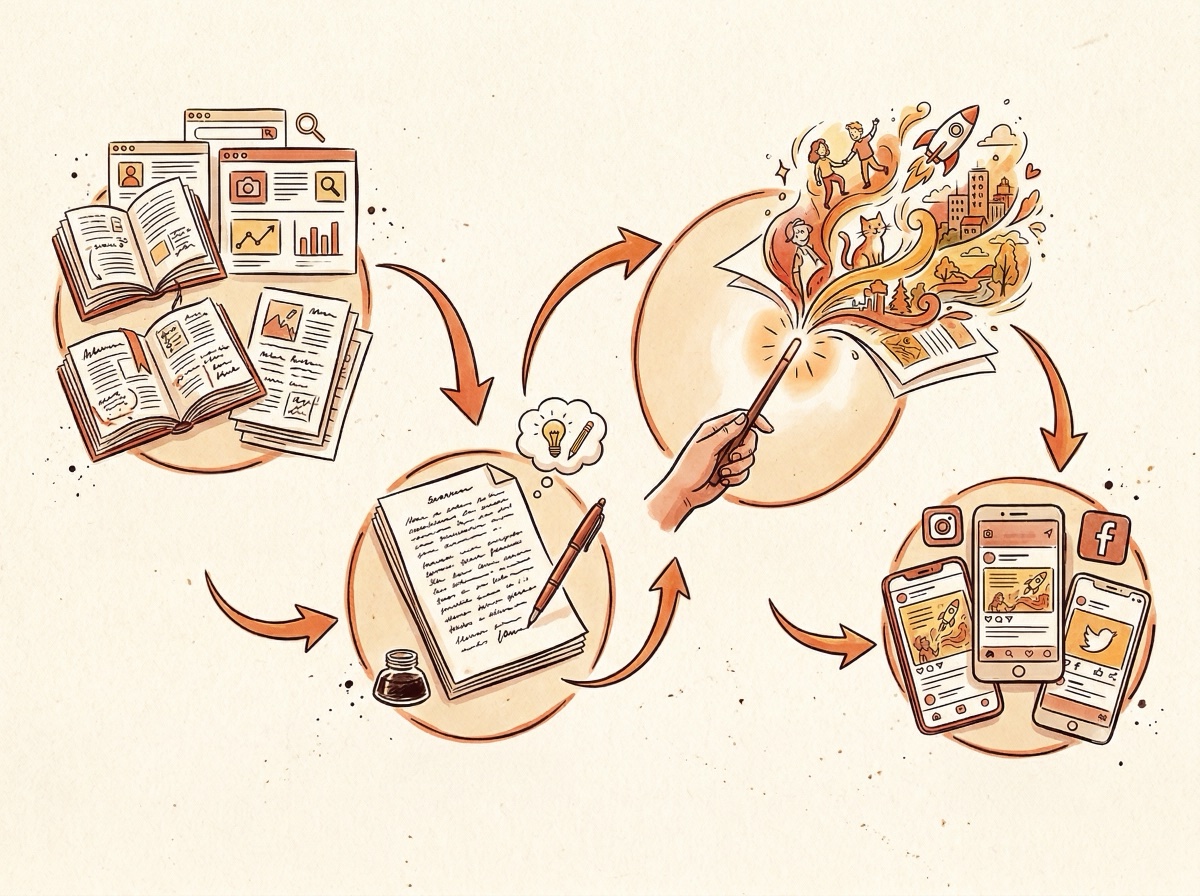

- The core of AI batch image-text creation is not "finding one good tool," but building a complete workflow of "material collection → story generation → illustration production → multi-platform distribution."

- Image-text content such as children's picture books, popular science, and knowledge cards is the best entry point for AI batch creation. A single person can now realistically produce 10-20 sets of high-quality image-text content per day.

- Character consistency, style unity, and copyright compliance are the three key challenges in AI image-text creation; specific solutions are provided in the text.

Your Peers Are Outpacing Your Content Production

A brutal fact: while you are still repeatedly modifying illustrations for a single image-text post, your competitors may have already completed an entire week's content schedule using AI tools.

According to industry data from early 2026, the global AI content creation market has reached $24.08 billion, a year-on-year increase of over 21% [1]. Even more noteworthy are the changes in the domestic market: self-media teams that have deeply adopted AI have increased content production efficiency by an average of 3-5 times. The process of topic planning, material gathering, and image-text design that used to take a week can now be shortened to 1-2 days [2].

This article is for self-media operators and image-text content creators looking for AI content creation tools, as well as creators who want to use AI to generate picture books, children's stories, and other image-text content. You will get a proven AI batch image-text creation workflow, with specific operational guidance for every step from material collection to finished output.

Why "Image-Text Content" is the Best Starting Point for AI Batch Creation

When many creators first encounter AI content creation tools, they try to write long articles or make videos directly. From an ROI perspective, however, image-text content is the category where AI batch creation succeeds most easily.

There are three reasons. First, the production chain for image-text content is short. A set of image-text content only requires two core elements: "copywriting + illustrations," and AI is already mature enough in both areas. Second, image-text content has a high fault tolerance. If an AI-generated illustration has minor flaws, it will hardly be noticed in a social media feed, but if an AI-generated video shows character distortion, viewers will notice immediately. Third, image-text content has many distribution channels. The same set of images and text can be published simultaneously on platforms like Xiaohongshu, WeChat Official Accounts, Zhihu, and Douyin, with extremely low marginal costs.

Children's picture books and popular science are two niches particularly suited to AI batch creation. Taking children's picture books as an example, a widely discussed practical case on Zhihu shows a creator using ChatGPT to generate story copy and Midjourney to generate illustrations, successfully listing the AI-generated children's book Alice and Sparkle on Amazon [3]. Domestically, creators have also used the combination of "Doubao + Jimeng AI" to run children's story accounts on Xiaohongshu, gaining over 100,000 followers in a single month.

The common logic behind these cases is: the technology for AI children's story generation and AI picture book generation has matured enough to support commercial operations. The key lies in whether you have an efficient workflow.

Four Core Challenges of Batch Image-Text Creation

Before you rush into action, understand the four most common pitfalls in AI batch image-text creation. These issues are repeatedly mentioned in the Reddit r/KDP community and creator discussions on Zhihu [4].

Challenge 1: Character Consistency. This is the biggest headache when generating picture book content with AI. You ask the AI to draw a little girl in a red hat; the first image shows a round face with short hair, while the second might turn into long hair with big eyes. Illustration analyst Sachin Kamath on X (Twitter), after studying over 1,000 AI picture book illustrations, pointed out that creators often focus only on whether a style "looks good" while ignoring the more critical issue of "can it stay consistent."

Challenge 2: Overextended Toolchains. A typical AI image-text creation process might involve 5-6 different tools: using ChatGPT for copy, Midjourney for images, Canva for layout, CapCut for captions, and then various platform backends for publishing. Every time you switch tools, your creative flow is interrupted, resulting in a massive loss of efficiency.

Challenge 3: Quality Fluctuations. The quality of AI-generated content is unstable. The same prompt might generate a stunning image today and a bizarre six-fingered hand tomorrow. When creating in batches, the time cost of quality control is often underestimated.

Challenge 4: Copyright Gray Areas. A 2025 report from the U.S. Copyright Office clearly stated that purely AI-generated content does not qualify for copyright protection without sufficient human creative contribution [5]. This means that if you plan to use AI-generated picture book content for commercial publishing, you must ensure there is enough manual editing and creative input.

Five Steps to Build Your AI Batch Image-Text Creation Workflow

Having understood the challenges, here is a battle-tested five-step workflow. The core idea of this process is to use a workspace that is as unified as possible to complete the entire flow, reducing efficiency loss caused by tool switching.

Step 1: Establish a Material Inspiration Library. The prerequisite for batch creation is having enough material in reserve. You need one place to save competitor analysis, trending topics, reference images, and style samples. Many creators use browser bookmarks or WeChat favorites, but material saved that way is scattered and hard to find when needed. A better approach is to use a specialized knowledge management tool to archive webpages, PDFs, images, and videos in one place, and use AI for quick retrieval and Q&A. For example, in YouMind, you can save viral posts from competitors, picture book style references, and target audience analysis reports into a single Board. Later, you can directly ask the AI, "What are the most common character settings in these picture books?" or "Which color scheme has the highest engagement rate for parenting accounts?" The AI will provide an analysis based on all the materials you've collected.

Step 2: Batch Generate Copywriting Frameworks. Once you have a material library, the next step is to batch generate content copy. Using children's stories as an example, you can first determine a series theme (e.g., "The Four Seasons Adventures of the Little Fox"), and then use AI to generate 10-20 story outlines at once, each containing a protagonist, setting, conflict, and resolution. A key tip is to define a Character Sheet in the prompt, including the character's appearance, personality traits, and catchphrases, so that consistency can be maintained when generating illustrations later.
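To make the Character Sheet idea concrete, here is a minimal Python sketch of how a fixed character description could be embedded in one batch prompt. The class name, field names, and the fox's details are illustrative assumptions, not part of any tool mentioned in this article; the resulting text can be pasted into whichever AI writing tool you use.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class CharacterSheet:
    """Fixed description of a recurring character (all values are illustrative)."""
    name: str
    appearance: str
    personality: str
    catchphrase: str

    def as_prompt_block(self) -> str:
        # One labeled line per trait so the model sees the same wording every time.
        return (
            f"Character: {self.name}\n"
            f"Appearance: {self.appearance}\n"
            f"Personality: {self.personality}\n"
            f"Catchphrase: {self.catchphrase}"
        )


def build_outline_prompt(series: str, sheet: CharacterSheet, count: int) -> str:
    """Compose a single prompt asking for `count` outlines that share one character."""
    if count < 1:
        raise ValueError("count must be at least 1")
    return (
        f"Series theme: {series}\n"
        f"{sheet.as_prompt_block()}\n"
        f"Write {count} story outlines. For each outline give: "
        "protagonist, setting, conflict, resolution. "
        "Do not change the character's appearance or personality."
    )


# Hypothetical character used only for this example.
fox = CharacterSheet(
    name="Little Fox",
    appearance="small orange fox, white-tipped tail, green scarf",
    personality="curious, kind, a little impatient",
    catchphrase="Let's find out!",
)
prompt = build_outline_prompt("The Four Seasons Adventures of the Little Fox", fox, 10)
print(prompt)
```

Keeping the sheet in one frozen object means the same wording is reused for every batch, and later for the illustration prompts as well.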

Step 3: Generate Illustrations with Unified Style. This is the most technical part of the workflow. AI image generation tools in 2026 are already better at handling character consistency. Operationally, it is recommended to first use a prompt to generate a Character Reference image, and then reference this in the prompt for every subsequent illustration. Tools that currently support this workflow include Midjourney (via the --cref parameter) and Recraft AI (via the style lock feature). YouMind's built-in image generation capabilities support multiple models such as Nano Banana Pro, Seedream 4.5, and GPT Image 1.5. You can compare the output of different models in the same workspace and choose the one that best fits your content style without jumping between multiple websites.
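The reference-image approach can be sketched as a small prompt builder. This Python example assumes a Midjourney-style prompt where `--cref` takes the URL of the character reference image and `--ar` sets the aspect ratio; the URL, style text, and scene descriptions are hypothetical, and parameter behavior can differ between Midjourney versions, so check the current documentation before relying on it.

```python
def scene_prompt(scene: str, style: str, cref_url: str, aspect: str = "3:4") -> str:
    """Attach the same style text and character reference to one scene description."""
    return f"{scene}, {style} --cref {cref_url} --ar {aspect}"


# Hypothetical values for illustration only.
REFERENCE_URL = "https://example.com/little-fox-reference.png"
STYLE = "soft watercolor children's book illustration"

scenes = [
    "Little Fox finds the first spring flower in a meadow",
    "Little Fox shelters from summer rain under a big leaf",
    "Little Fox collects red autumn leaves by the river",
]

# Every prompt reuses the same reference and style, so only the scene changes.
prompts = [scene_prompt(scene, STYLE, REFERENCE_URL) for scene in scenes]
for p in prompts:
    print(p)
```

Only the scene text varies between prompts, which is the point: anything that describes the character or the style is written once and reused.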

Step 4: Assembly and Quality Audit. After assembling the copy and illustrations into complete image-text content, a manual audit is mandatory. Focus on three aspects: whether the character's appearance is consistent across different scenes, whether there are common AI logical errors in the copy (such as contradictory plots), and whether there are obvious AI artifacts in the images (extra fingers, distorted text, etc.). This step cannot be skipped; it determines whether your content is "AI trash" or "AI-assisted high-quality content."
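One way to keep the audit from being skipped in a batch is to record the three checks per content set. The Python sketch below is only a bookkeeping aid under that assumption: the judgments themselves are still made by a human reviewer, and the class and field names are invented for this example.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Audit:
    """Manual review result for one image-text set (values entered by a person)."""
    set_id: str
    character_consistent: bool   # same appearance across scenes
    copy_logic_ok: bool          # no contradictory plot points
    no_image_artifacts: bool     # no extra fingers, distorted text, etc.

    def failures(self) -> List[str]:
        checks = {
            "character consistency": self.character_consistent,
            "copy logic": self.copy_logic_ok,
            "image artifacts": self.no_image_artifacts,
        }
        return [name for name, ok in checks.items() if not ok]

    @property
    def publishable(self) -> bool:
        # A set is publishable only when all three checks pass.
        return not self.failures()


batch = [
    Audit("fox-spring", True, True, True),
    Audit("fox-summer", True, True, False),
    Audit("fox-autumn", False, True, False),
]

ready = [a.set_id for a in batch if a.publishable]
rework = {a.set_id: a.failures() for a in batch if not a.publishable}
print("Ready:", ready)
print("Needs rework:", rework)
```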

Step 5: Multi-platform Adaptation and Distribution. The same set of image-text content requires different formats for different platforms. Xiaohongshu prefers vertical images (3:4) with short copy, WeChat Official Accounts need horizontal cover images with long articles, and Douyin image-text posts require 9:16 vertical images with captions. When creating in batches, it is recommended to generate versions in multiple ratios during the image generation stage rather than cropping them afterward.
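The ratios above translate into pixel sizes as in the following Python sketch. The 3:4 and 9:16 ratios come from the text; the 16:9 ratio for WeChat covers and the 1920-pixel long edge are assumptions chosen for illustration, since the text only says "horizontal" and names no resolution.

```python
from fractions import Fraction

# Width:height ratios per platform.
PLATFORM_RATIOS = {
    "xiaohongshu": Fraction(3, 4),     # from the text
    "douyin": Fraction(9, 16),         # from the text
    "wechat_cover": Fraction(16, 9),   # assumption: the text only says "horizontal"
}


def pixel_size(ratio: Fraction, long_edge: int = 1920) -> tuple:
    """Return (width, height) with the longer side equal to long_edge."""
    if ratio >= 1:
        # Landscape or square: width is the long edge.
        return long_edge, round(long_edge / ratio)
    # Portrait: height is the long edge.
    return round(long_edge * ratio), long_edge


sizes = {name: pixel_size(ratio) for name, ratio in PLATFORM_RATIOS.items()}
for name, (width, height) in sizes.items():
    print(f"{name}: {width}x{height}")
```

With a 1920-pixel long edge this gives 1440x1920 for Xiaohongshu, 1080x1920 for Douyin, and 1920x1080 for the assumed WeChat cover ratio.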

How to Choose AI Image-Text Creation Tools

The number of AI content creation tools on the market is vast, with TechTarget listing over 35 in its 2026 review [6]. For batch image-text creation scenarios, you should focus on three dimensions when choosing a tool: whether it supports integrated image-text creation (completing copy and images on the same platform), whether it supports switching between multiple models (different models excel at different styles), and whether it has workflow automation capabilities (reducing repetitive operations).

| Tool | Best Scenario | Free Version | Core Advantage |
|---|---|---|---|
|  | Full material research + image-text creation flow | ✅ | Multi-model image generation + Knowledge management + Agent workflows; one-stop from material collection to output |
|  | Layout and template design | ✅ | Massive templates, great for quick layout, but limited AI image generation |
|  | Specialized children's picture book creation | Trial credits | Focused on picture books with good character consistency, but limited to that category |
|  | Personalized children's storybooks | ✅ | Simple to use, suitable for parents and teachers, but weak batch creation capabilities |

It should be noted that YouMind currently excels in the complete "research to creation" chain. If your need is simply to generate a single illustration, specialized tools like Midjourney may have an advantage in image quality. YouMind's unique value lies in the fact that you can complete material collection, AI Q&A research, copywriting, multi-model image generation, and even create automated workflows through the Skills feature in a single workspace, turning repetitive creative steps into one-click Agent tasks.

FAQ

Q: Can AI-generated children's picture books be used commercially?

A: Yes, but with conditions. The 2025 U.S. Copyright Office guidelines indicate that AI-generated content needs "sufficient human creative contribution" to obtain copyright protection. In practice, you need to substantially edit the AI-generated copy, adjust and recreate the illustrations, and keep a complete record of the creative process. When publishing on platforms like Amazon KDP, you must truthfully label it as AI-assisted creation.

Q: How many sets of image-text content can one person produce per day using AI?

A: It depends on the content type and quality requirements. For children's story content, once a mature workflow is established, it is achievable for one person to produce 10-20 sets per day (each set containing 6-8 illustrations + complete copy). However, this figure assumes you already have stable character settings, style templates, and quality audit processes. When starting out, it is recommended to begin with 3-5 sets per day and gradually optimize the process.
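The figures in this answer imply a daily illustration count that is worth working out before committing to a schedule. The short Python sketch below multiplies the ranges quoted above; the one-minute review time per image is purely an assumption added to show how audit time scales, not a number from this article.

```python
def daily_range(sets_per_day: tuple, images_per_set: tuple) -> tuple:
    """Multiply two (low, high) ranges to get a (low, high) daily image count."""
    return (
        sets_per_day[0] * images_per_set[0],
        sets_per_day[1] * images_per_set[1],
    )


IMAGES_PER_SET = (6, 8)                                # from the text
mature = daily_range((10, 20), IMAGES_PER_SET)         # established workflow
starter = daily_range((3, 5), IMAGES_PER_SET)          # recommended starting pace

REVIEW_MINUTES_PER_IMAGE = 1                           # assumption, not from the article

print(f"Mature workflow: {mature[0]}-{mature[1]} images/day")
print(f"Starting out: {starter[0]}-{starter[1]} images/day")
print(
    "Review time at the assumed pace: "
    f"{mature[0] * REVIEW_MINUTES_PER_IMAGE}-{mature[1] * REVIEW_MINUTES_PER_IMAGE} minutes/day"
)
```

At 10-20 sets per day this comes to 60-160 illustrations, versus 18-40 at the starting pace of 3-5 sets, which is why the audit step needs its own time budget.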

Q: Will AI image-text content be throttled by platforms?

A: Google's 2025 official guidelines clearly state that search rankings focus on content quality and E-E-A-T signals (Experience, Expertise, Authoritativeness, Trustworthiness), rather than whether the content was generated by AI [7]. Domestic platforms hold a similar stance: as long as the content is valuable to users and not low-quality batch spam, AI-assisted content will not be specifically throttled. The key is to ensure every piece of content undergoes manual review and personalized adjustment.

Q: What are the startup costs for an AI picture book account?

A: You can start with almost zero cost. Most AI content creation tools offer free credits, enough for you to complete initial testing and workflow setup. Once you have validated the content direction and audience feedback, you can choose a paid plan based on your production needs. For example, the free version of YouMind already includes basic image generation and document creation capabilities, while paid plans offer more model choices and higher usage limits.

Summary

In 2026, AI batch image-text creation is no longer a question of "can it be done," but "how to do it more efficiently than others."

Keep three core points in mind. First, the workflow is more important than any single tool. Instead of spending time comparing which AI image tool is best, spend time building a complete process from material collection to distribution. Second, manual review is the quality baseline. AI is responsible for speed, and humans are responsible for oversight; this division of labor will not change in the foreseeable future. Third, start small and iterate quickly. Choose a niche category (like children's bedtime stories), run the process with the simplest tool combination, and then gradually optimize and expand.

If you are looking for a platform that covers the entire "material research → copywriting → AI image generation → workflow automation" chain, you can try YouMind for free and start building your image-text content production line from a single Board.

References

[1] Global Generative AI in Content Creation Market Size Report (2026-2035)

[2] AI Reshaping the Self-Media Ecosystem: 2025 Trends, Strategies, and Practice White Paper

[3] AI Children's Picture Books Are Viral: Gameplay and Case Analysis

[4] Reddit r/KDP: Discussion on Best AI Tools for Children's Book Illustration

[5] How to Build an AI Children's Book Illustration Generator (MindStudio Tutorial)

[6] 35 AI Content Generators to Explore in 2026 (TechTarget)

[7] Top AI Content Creation Platforms in 2026 (Clarity Ventures)


GPT Image 2 Leak Hands-on: Does It Beat Nano Banana Pro in Blind Tests?

TL;DR Key Takeaways On April 4, 2024, independent developer Pieter Levels (@levelsio) was the first to break the news on X: three mysterious image generation models appeared on the Arena blind testing platform, codenamed maskingtape-alpha, gaffertape-alpha, and packingtape-alpha. While these names sound like a hardware store's tape aisle, the quality of the generated images sent the AI community into a frenzy. This article is for creators, designers, and tech enthusiasts following the latest trends in AI image generation. If you have used Nano Banana Pro or GPT Image 1.5, this post will help you quickly understand the true capabilities of the next-generation model. A discussion thread in the Reddit r/singularity sub gained 366 upvotes and over 200 comments within 24 hours. User ThunderBeanage posted: "From my testing, this model is absolutely insane, far beyond Nano Banana." A more critical clue: when users directly asked the model about its identity, it claimed to be from OpenAI. Image Source: @levelsio's initial leak of the GPT Image 2 Arena blind test screenshot If you frequently use AI to generate images, you know the struggle: getting a model to correctly render text has always been a maddening challenge. Spelling errors, distorted letters, and chaotic layouts are common issues across almost all image models. GPT Image 2's breakthrough in this area is the central focus of community discussion. @PlayingGodAGI shared two highly convincing test images: one is an anatomical diagram of the anterior human muscles, where every muscle, bone, nerve, and blood vessel label reached textbook-level precision; the other is a YouTube homepage screenshot where UI elements, video thumbnails, and title text show no distortion. He wrote in his tweet: "This eliminates the last flaw of AI-generated images." 
Image Source: Comparison of anatomical diagram and YouTube screenshot shown by @PlayingGodAGI @avocadoai_co's evaluation was even more direct: "The text rendering is just absolutely insane." @0xRajat also pointed out: "This model's world knowledge is scary good, and the text rendering is near perfect. If you've used any image generation model, you know how deep this pain point goes." Image Source: Website interface restoration results independently tested by Japanese blogger @masahirochaen Japanese blogger @masahirochaen also conducted independent tests, confirming that the model performs exceptionally well in real-world descriptions and website interface restoration—even the rendering of Japanese Kana and Kanji is accurate. Reddit users noticed this as well, commenting that "what impressed me is that the Kanji and Katakana are both valid." This is the question everyone cares about most: Has GPT Image 2 truly surpassed Nano Banana Pro? @AHSEUVOU15 performed an intuitive three-image comparison test, placing outputs from Nano Banana Pro, GPT Image 2 (from A/B testing), and GPT Image 1.5 side-by-side. Image Source: Three-image comparison by @AHSEUVOU15; from right to left: NBP, GPT Image 2, GPT Image 1.5 @AHSEUVOU15's conclusion was cautious: "In this case, NBP is still better, but GPT Image 2 is definitely a significant improvement over 1.5." This suggests the gap between the two models is now very small, with the winner depending on the specific type of prompt. According to in-depth reporting by OfficeChai, community testing revealed more details : @socialwithaayan shared beach selfies and Minecraft screenshots that further confirmed these findings, summarizing: "Text rendering is finally usable; world knowledge and realism are next level." Image Source: GPT Image 2 Minecraft game screenshot generation shared by @socialwithaayan [9](https://x.com/socialwithaayan/status/2040434305487507475) GPT Image 2 is not without its weaknesses. 
OfficeChai reported that the model still fails the Rubik's Cube reflection test. This is a classic stress test in the field of image generation, requiring the model to understand mirror relationships in 3D space and accurately render the reflection of a Rubik's Cube in a mirror. Reddit user feedback echoed this. One person testing the prompt "design a brand new creature that could exist in a real ecosystem" found that while the model could generate visually complex images, the internal spatial logic was not always consistent. As one user put it: "Text-to-image models are essentially visual synthesizers, not biological simulation engines." Additionally, early blind test versions (codenamed Chestnut and Hazelnut) reported by 36Kr previously received criticism for looking "too plastic." However, judging by community feedback on the latest "tape" series, this issue seems to have been significantly improved. The timing of the GPT Image 2 leak is intriguing. On March 24, 2024, OpenAI announced the shutdown of Sora, its video generation app, just six months after its launch. Disney reportedly only learned of the news less than an hour before the announcement. At the time, Sora was burning approximately $1 million per day, with user numbers dropping from a peak of 1 million to fewer than 500,000. Shutting down Sora freed up a massive amount of compute power. OfficeChai's analysis suggests that next-generation image models are the most logical destination for this compute. OpenAI's GPT Image 1.5 had already topped the LMArena image leaderboard in December 2025, surpassing Nano Banana Pro. If the "tape" series is indeed GPT Image 2, OpenAI is doubling down on image generation—the "only consumer AI field still likely to achieve viral mass adoption." Notably, the three "tape" models have now been removed from LMArena. Reddit users believe this could mean an official release is imminent. 
Combined with previously circulated roadmaps, the new generation of image models is highly likely to launch alongside the rumored GPT-5.2. Although GPT Image 2 is not yet officially live, you can prepare now using existing tools: Note that model performance in Arena blind tests may differ from the official release version. Models in the blind test phase are usually still being fine-tuned, and final parameter settings and feature sets may change. Q: When will GPT Image 2 be officially released? A: OpenAI has not officially confirmed the existence of GPT Image 2. However, the removal of the three "tape" codename models from Arena is widely seen by the community as a signal that an official release is 1 to 3 weeks away. Combined with GPT-5.2 release rumors, it could launch as early as mid-to-late April 2024. Q: Which is better, GPT Image 2 or Nano Banana Pro? A: Current blind test results show both have their advantages. GPT Image 2 leads in text rendering, UI restoration, and world knowledge, while Nano Banana Pro still offers better overall image quality in some scenarios. A final conclusion will require larger-scale systematic testing after the official version is released. Q: What is the difference between maskingtape-alpha, gaffertape-alpha, and packingtape-alpha? A: These three codenames likely represent different configurations or versions of the same model. From community testing, maskingtape-alpha performed most prominently in tests like Minecraft screenshots, but the overall level of the three is similar. The naming style is consistent with OpenAI's previous gpt-image series. Q: Where can I try GPT Image 2? A: GPT Image 2 is not currently publicly available, and the three "tape" models have been removed from Arena. You can follow to wait for the models to reappear, or wait for the official OpenAI release to use it via ChatGPT or the API. Q: Why has text rendering always been a challenge for AI image models? 
A: Traditional diffusion models generate images at the pixel level and are naturally poor at content requiring precise strokes and spacing, like text. The GPT Image series uses an autoregressive architecture rather than a pure diffusion model, allowing it to better understand the semantics and structure of text, leading to breakthroughs in text rendering. The leak of GPT Image 2 marks a new phase of competition in the field of AI image generation. Long-standing pain points like text rendering and world knowledge are being rapidly addressed, and Nano Banana Pro is no longer the only benchmark. Spatial reasoning remains a common weakness for all models, but the speed of progress is far exceeding expectations. For AI image generation users, now is the best time to build your own evaluation system. Use the same set of prompts for cross-model testing and record the strengths of each model so that when GPT Image 2 officially goes live, you can make an accurate judgment immediately. Want to systematically manage your AI image prompts and test results? Try to save outputs from different models to the same Board for easy comparison and review. [1] [2] [3] [4] [5] [6] [7] [8] [9] [10]

Jensen Huang Announces "AGI Is Here": Truth, Controversy, and In-depth Analysis

TL; DR Key Takeaways On March 23, 2026, a piece of news exploded across social media. NVIDIA CEO Jensen Huang uttered those words on the Lex Fridman podcast: "I think we've achieved AGI." This tweet posted by Polymarket garnered over 16,000 likes and 4.7 million views, with mainstream tech media like The Verge, Forbes, and Mashable providing intensive coverage within hours. This article is for all readers following AI trends, whether you are a technical professional, an investor, or a curious individual. We will fully restore the context of this statement, deconstruct the "word games" surrounding the definition of AGI, and analyze what it means for the entire AI industry. But if you only read the headline to draw a conclusion, you will miss the most important part of the story. To understand the weight of Huang's statement, one must first look at its prerequisites. Podcast host Lex Fridman provided a very specific definition of AGI: whether an AI system can "do your job," specifically starting, growing, and operating a tech company worth over $1 billion. He asked Huang how far away such an AGI is—5 years? 10 years? 20 years? Huang's answer was: "I think it's now." An in-depth analysis by Mashable pointed out a key detail. Huang told Fridman: "You said a billion, and you didn't say forever." In other words, in Huang's interpretation, if an AI can create a viral app, make $1 billion briefly, and then go bust, it counts as having "achieved AGI." He cited OpenClaw, an open-source AI Agent platform, as an example. Huang envisioned a scenario where an AI creates a simple web service that billions of people use for 50 cents each, and then the service quietly disappears. He even drew an analogy to websites from the dot-com bubble era, suggesting that the complexity of those sites wasn't much higher than what an AI Agent can generate today. Then, he said the sentence ignored by most clickbait headlines: "The odds of 100,000 of those agents building NVIDIA is zero percent." 
This isn't a minor footnote. As Mashable commented: "That's not a small caveat. It's the whole ballgame." Jensen Huang is not the first tech leader to declare "AGI achieved." To understand this statement, it must be placed within a larger industry narrative. In 2023, at the New York Times DealBook Summit, Huang gave a different definition of AGI: software that can pass various tests approximating human intelligence at a reasonably competitive level. At the time, he predicted AI would reach this standard within 5 years. In December 2025, OpenAI CEO Sam Altman stated "we built AGIs," adding that "AGI kinda went whooshing by," with its social impact being much smaller than expected, suggesting the industry shift toward defining "superintelligence." In February 2026, Altman told Forbes: "We basically have built AGI, or very close to it." But he later added that this was a "spiritual" statement, not a literal one, noting that AGI still requires "many medium-sized breakthroughs." See the pattern? Every "AGI achieved" declaration is accompanied by a quiet downgrade of the definition. OpenAI's founding charter defines AGI as "highly autonomous systems that outperform humans at most economically valuable work." This definition is crucial because OpenAI's contract with Microsoft includes an AGI trigger clause: once AGI is deemed achieved, Microsoft's access rights to OpenAI's technology will change significantly. According to Reuters, the new agreement stipulates that an independent panel of experts must verify if AGI has been achieved, with Microsoft retaining a 27% stake and enjoying certain technology usage rights until 2032. When tens of billions of dollars are tied to a vague term, "who defines AGI" is no longer an academic question but a commercial power play. While tech media reporting remained somewhat restrained, reactions on social media spanned a vastly different spectrum. 
Communities like r/singularity, r/technology, and r/BetterOffline on Reddit quickly saw a surge of discussion threads. One r/singularity user's comment received high praise: "AGI is not just an 'AI system that can do your job'. It's literally in the name: Artificial GENERAL Intelligence." On r/technology, a developer claiming to be building AI Agents for automating desktop tasks wrote: "We are nowhere near AGI. Current models are great at structured reasoning but still can't handle the kind of open-ended problem solving a junior dev does instinctively. Jensen is selling GPUs though, so the optimism makes sense." Discussions on Chinese Twitter/X were equally active. User @DefiQ7 posted a detailed educational thread clearly distinguishing AGI from current "specialized AI" (like ChatGPT or Ernie Bot), which was widely shared. The post noted: "This is nuclear-level news for the tech world," but also emphasized that AGI implies "cross-domain, autonomous learning, reasoning, planning, and adapting to unknown scenarios," which is beyond the current scope of AI capabilities. Discussions on r/BetterOffline were even sharper. One user commented: "Which is higher? The number of times Trump has achieved 'total victory' in Iran, or the number of times Jensen Huang has achieved 'AGI'?" Another user pointed out a long-standing issue in academia: "This has been a problem with Artificial Intelligence as an academic field since its very inception." Faced with the ever-changing AGI definitions from tech giants, how can the average person judge how far AI has actually progressed? Here is a practical framework for thinking. Step 1: Distinguish between "Capability Demos" and "General Intelligence." Current state-of-the-art AI models indeed perform amazingly on many specific tasks. GPT-5.4 can write fluid articles, and AI Agents can automate complex workflows. However, there is a massive chasm between "performing well on specific tasks" and "possessing general intelligence." 
An AI that can beat a world champion at chess might not even be able to "hand me the cup on the table." Step 2: Focus on the qualifiers, not the headlines. Huang said "I think," not "We have proven." Altman said "spiritual," not "literal." These qualifiers aren't modesty; they are precise legal and PR strategies. When tens of billions of dollars in contract terms are at stake, every word is carefully weighed. Step 3: Look at actions, not declarations. At GTC 2026, NVIDIA released seven new chips and introduced DLSS 5, the OpenClaw platform, and the NemoClaw enterprise Agent stack. These are tangible technical advancements. However, Huang mentioned "inference" nearly 40 times in his speech, while "training" was mentioned only about 10 times. This indicates the industry's focus is shifting from "building smarter AI" to "making AI execute tasks more efficiently." This is engineering progress, not an intelligence breakthrough. Step 4: Build your own information tracking system. The information density in the AI industry is extremely high, with major releases and statements every week. Relying solely on clickbait news feeds makes it easy to be misled. It is recommended to develop a habit of reading primary sources (such as official company blogs, academic papers, and podcast transcripts) and using tools to systematically save and organize this data. For example, you can use the Board feature in to save key sources, and use AI to ask questions and cross-verify the data at any time, avoiding being misled by a single narrative. Q: Is the AGI Jensen Huang is talking about the same as the AGI defined by OpenAI? A: No. Huang answered based on the narrow definition proposed by Lex Fridman (AI being able to start a $1 billion company), whereas the AGI definition in OpenAI's charter is "highly autonomous systems that outperform humans at most economically valuable work." 
There is a massive gap between the two standards, with the latter requiring a scope of capability far beyond the former. Q: Can current AI really operate a company independently? A: Not currently. Huang himself admitted that while an AI Agent might create a short-lived viral app, "the odds of building NVIDIA is zero." Current AI excels at structured task execution but still relies heavily on human guidance in scenarios requiring long-term strategic judgment, cross-domain coordination, and handling unknown situations. Q: What impact will the achievement of AGI have on everyday jobs? A: Even by the most optimistic definitions, the impact of current AI is primarily seen in improving the efficiency of specific tasks rather than fully replacing human work. Sam Altman also admitted in late 2025 that AGI's "social impact is much smaller than expected." In the short term, AI is more likely to change the way we work as a powerful assistant tool rather than directly replacing roles. Q: Why are tech CEOs so eager to declare that AGI has been achieved? A: The reasons are multifaceted. NVIDIA's core business is selling AI compute chips; the AGI narrative maintains market enthusiasm for investment in AI infrastructure. OpenAI's contract with Microsoft includes AGI trigger clauses, where the definition of AGI directly affects the distribution of tens of billions of dollars. Furthermore, in capital markets, the "AGI is coming" narrative is a major pillar supporting the high valuations of AI companies. Q: How far is China's AI development from AGI? A: China has made significant progress in the AI field. As of June 2025, the number of generative AI users in China reached 515 million, and large models like DeepSeek and Qwen have performed excellently in various benchmarks. However, AGI is a global technical challenge, and currently, there is no AGI system widely recognized by the global academic community. 
The market size of China's AI industry is expected to grow at a compound annual rate of 30.6% to 47.1% from 2025 to 2035, showing strong momentum.

Jensen Huang's "AGI achieved" statement is essentially an optimistic expression based on an extremely narrow definition, rather than a verified technical milestone. He himself admitted that current AI Agents are worlds away from building truly complex enterprises.

The phenomenon of repeatedly "moving the goalposts" for the definition of AGI reveals the delicate interplay between technical narrative and commercial interests in the tech industry. From OpenAI to NVIDIA, every "we achieved AGI" claim is accompanied by a quiet lowering of the standard. As information consumers, what we need is not to chase headlines but to build our own framework for judgment.

AI technology is undoubtedly progressing rapidly. The new chips, Agent platforms, and inference optimization technologies released at GTC 2026 are real engineering breakthroughs. But packaging these advancements as "AGI achieved" is more of a market narrative strategy than a scientific conclusion. Staying curious, remaining critical, and continuously tracking primary sources is the best strategy to avoid being overwhelmed by the flood of information in this era of AI acceleration.

Want to systematically track AI industry trends? Try YouMind to save key sources to your personal knowledge base and let AI help you organize, query, and cross-verify.

The Rise of AI Influencers: Essential Trends and Opportunities for Creators

TL; DR Key Takeaways

On March 21, 2026, Elon Musk posted a tweet on X with only eight words: "AI bots will be more human than human." This tweet garnered over 62 million views and 580,000 likes within 72 hours. He wrote this in response to an AI-generated image of a "perfect influencer face."

This isn't a sci-fi prophecy. If you are a content creator, blogger, or social media manager, you have likely already scrolled past those "too perfect" faces in your feed, unable to tell if they are human or AI. This article will take you through the reality of AI virtual influencers, the income data of top cases, and how you, as a human creator, should respond to this transformation.

This article is suitable for content creators, social media operators, brand marketers, and anyone interested in AI trends.

First, let's look at a set of numbers that will make you sit up. The global virtual influencer market size reached $6.06 billion in 2024 and is expected to grow to $8.3 billion in 2025, an annual growth rate of roughly 37%. According to Straits Research, this figure is projected to soar to $111.78 billion by 2033. Meanwhile, the entire influencer marketing industry reached $32.55 billion in 2025 and is expected to break the $40 billion mark by 2026.

Looking at specific individuals, two representative cases are worth a closer look.

Lil Miquela is widely recognized as the "first-generation AI influencer." This virtual character, born in 2016, has over 2.4 million followers on Instagram and has collaborated with brands like Prada, Calvin Klein, and Samsung. Her team (part of Dapper Labs) charges tens of thousands of dollars per branded post. Her subscription income on the Fanvue platform alone reaches $40,000 per month, and combined with brand partnerships, her monthly income can exceed $100,000. It is estimated that her average annual income since 2016 is approximately $2 million.
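
The figures quoted in this section can be sanity-checked with a few lines of arithmetic. The sketch below is not taken from the cited reports: the helper name `growth_rate` is an illustrative assumption, and the inputs are the rounded values quoted above, so the results are approximate.

```python
def growth_rate(start: float, end: float, years: int = 1) -> float:
    """Compound annual growth rate between two values `years` apart."""
    return (end / start) ** (1 / years) - 1


# Market sizes as quoted in the text, in billions of USD (rounded).
market_2024, market_2025, market_2033 = 6.06, 8.3, 111.78

# One-year growth from 2024 to 2025.
yoy_2025 = growth_rate(market_2024, market_2025)

# Compound rate implied by the 2033 projection, starting from 2025.
cagr_2025_2033 = growth_rate(market_2025, market_2033, years=2033 - 2025)

# Lil Miquela's quoted monthly figures, annualized (USD).
subscription_per_year = 40_000 * 12
combined_floor_per_year = 100_000 * 12

print(f"2024 -> 2025 growth: {yoy_2025:.1%}")
print(f"Implied 2025 -> 2033 CAGR: {cagr_2025_2033:.1%}")
print(f"Subscriptions per year: ${subscription_per_year:,}")
print(f"Combined income per year at $100,000/month: ${combined_floor_per_year:,}")
```

With these rounded inputs, the 2024 to 2025 growth comes out close to the quoted 37%, and the 2033 projection implies a similar compound rate of roughly 38% per year. The monthly income figures annualize to $480,000 from subscriptions and $1.2 million at the $100,000 mark, so the $2 million annual estimate would require many months well above that mark.
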
Aitana López represents the possibility that "individual entrepreneurs can also create AI influencers." This pink-haired virtual model, created by the Spanish creative agency The Clueless, has over 370,000 followers on Instagram and earns between €3,000 and €10,000 per month. The reason for her creation was practical: founder Rubén Cruz was tired of the uncontrollable factors of human models (being late, cancellations, schedule conflicts), so he decided to "create an influencer who would never flake."

A prediction by PR giant Ogilvy in 2024 sent shockwaves through the industry: by 2026, AI virtual influencers will occupy 30% of influencer marketing budgets. A survey of 1,000 senior marketers in the UK and US showed that 79% of respondents said they are increasing investment in AI-generated content creators.

To see the underlying drivers of this change, you must understand the logic of brands.

Zero risk, total control. The biggest risk with human influencers is "scandal." A single inappropriate comment or a personal scandal can flush millions in brand investment down the drain. Virtual influencers don't have this problem. They don't get tired, they don't age, and they won't post a tweet at 3 AM that makes the PR team collapse. As Rubén Cruz, founder of The Clueless, said: "Many projects were put on hold or canceled due to issues with the influencers themselves; it wasn't a design flaw, but human unpredictability."

24/7 content output. Virtual influencers can post daily, follow trends in real time, and "appear" in any setting at a cost far lower than a human shoot. According to estimates by BeyondGames, if Lil Miquela posts once a day on Instagram, her potential income in 2026 could reach £4.7 million. This level of output efficiency is unmatched by any human creator.

Precise brand consistency. Prada's collaboration with Lil Miquela resulted in an engagement rate 30% higher than regular marketing campaigns.
Every expression, every outfit, and every caption of a virtual influencer can be precisely designed to ensure a perfect fit with the brand's tone.

However, there are two sides to every coin. A report by Business Insider in March 2026 pointed out that consumer backlash against AI accounts is rising, and some brands have already begun to retreat from AI influencer strategies. A YouGov survey showed that more than one-third of respondents expressed concern about AI technology. This means virtual influencers are not a panacea; authenticity remains an important factor for consumers.

In the face of the impact of AI virtual influencers, panic is useless; action is valuable. Here are four proven strategies for responding.

Strategy 1: Deepen authentic experiences; do what AI cannot. AI can generate a perfect face, but it cannot truly taste a cup of coffee or feel the exhaustion and satisfaction of a hike. In a discussion on Reddit's r/Futurology, a user's comment received high praise: "AI influencers can sell products, but people still crave real connections." Turn your real-life experiences, unique perspectives, and imperfect moments into a content moat.

Strategy 2: Arm yourself with AI tools rather than fighting AI. Smart creators are already using AI to boost efficiency. Creators on Reddit have shared complete workflows: using ChatGPT for scripts, ElevenLabs for voiceovers, and HeyGen for video production. You don't need to become an AI influencer, but you need to make AI your creative assistant.

Strategy 3: Systematically track industry trends to build an information advantage. The AI influencer field moves incredibly fast, with new tools, cases, and data appearing every week. Randomly scrolling through Twitter and Reddit is far from enough.
You can use YouMind to systematically manage industry information scattered everywhere: save key articles, tweets, and research reports into a Board, use AI to automatically organize and retrieve them, and ask your asset library questions at any time, such as "What were the three largest funding rounds in the virtual influencer space in 2026?". When you need to write an industry analysis or film a video, the materials are already in place instead of starting from scratch.

Strategy 4: Explore human-AI collaborative content models. The future is not a zero-sum game of "Human vs. AI," but a collaborative symbiosis of "Human + AI." You can use AI to generate visual materials but give them a soul with a human voice and perspective. One industry analysis points out that AI influencers are suitable for experimental, boundary-pushing concepts, while human influencers remain irreplaceable in building deep audience connections and solidifying brand value.

The biggest challenge in tracking AI virtual influencer trends is not too little information, but too much information that is too scattered.

A typical scenario: You see a tweet from Musk on X, read a breakdown post on Reddit about an AI influencer earning $10,000 a month, find an in-depth report on Business Insider about brands retreating, and then scroll past a tutorial on YouTube. This information is scattered across four platforms and five browser tabs. Three days later, when you want to write an article, you can't find that key piece of data.

This is exactly the problem YouMind solves. You can use YouMind to clip any webpage, tweet, or YouTube video to your dedicated Board with one click. AI will automatically extract key information and build an index, allowing you to search and ask questions in natural language at any time. For example, create an "AI Virtual Influencer Research" Board to manage all relevant materials centrally. When you need to produce content, ask the Board directly: "What is Aitana López's business model?"
or "Which brands have started to retreat from AI influencer strategies?", and the answers will be presented with links to the original sources.

It should be noted that YouMind's strength lies in information integration and research assistance; it is not an AI influencer generation tool. If your need is to create virtual character images, you still need professional tools like Midjourney, Stable Diffusion, or HeyGen. However, in the core creator workflow of "Research Trends → Accumulate Materials → Produce Content," YouMind can significantly shorten the distance from inspiration to finished product.

Q: Will AI virtual influencers completely replace human influencers?
A: Not in the short term. Virtual influencers have advantages in brand controllability and content output efficiency, but the consumer demand for authenticity remains strong. Business Insider's 2026 report shows that some brands have begun to reduce AI influencer investment due to consumer backlash. The two are more likely to form a complementary relationship rather than a replacement one.

Q: Can an average person create their own AI virtual influencer?
A: Yes. Many creators on Reddit have shared their experiences of starting from scratch. Common tools include Midjourney or Stable Diffusion for generating consistent images, ChatGPT for writing copy, and ElevenLabs for generating voice. The initial investment can be very low, but it requires 3 to 6 months of consistent operation to see significant growth.

Q: What are the income sources for AI virtual influencers?
A: There are mainly three categories: brand-sponsored posts (top virtual influencers charge thousands to tens of thousands of dollars per post), subscription platform income (such as Fanvue), and derivatives and music royalties. Lil Miquela earns an average of $40,000 per month from subscription income alone, with brand collaboration income being even higher.

Q: What is the current state of the AI virtual idol market in China?
A: China is one of the most active markets for virtual idol development globally. According to industry forecasts, the Chinese virtual influencer market will reach 270 billion RMB by 2030. From Hatsune Miku and Luo Tianyi to hyper-realistic virtual idols, the Chinese market has gone through several development stages and is currently evolving toward AI-driven real-time interaction.

Q: What should brands look for when choosing to collaborate with virtual influencers?
A: It is crucial to evaluate three points: the target audience's acceptance of virtual personas, the platform's AI content disclosure policies (TikTok and Instagram are strengthening related requirements), and the fit between the virtual influencer and the brand's tone. It is recommended to test with a small budget first and then decide whether to increase investment based on data.

The rise of AI virtual influencers is not a distant prophecy but a reality happening right now. Market data clearly shows that the commercial value of virtual influencers has been verified: from Lil Miquela's $2 million annual income to Aitana López's €10,000 monthly earnings, these numbers cannot be ignored.

But for human creators, this is not a story of "being replaced," but an opportunity to "reposition." Your authentic experiences, unique perspectives, and emotional connection with your audience are core assets that AI cannot replicate. The key lies in using AI tools to improve efficiency, using systematic methods to track trends, and using authenticity to build an irreplaceable competitive moat.

Want to systematically track AI influencer trends and accumulate creative materials? Try building your dedicated research space with YouMind and start for free.