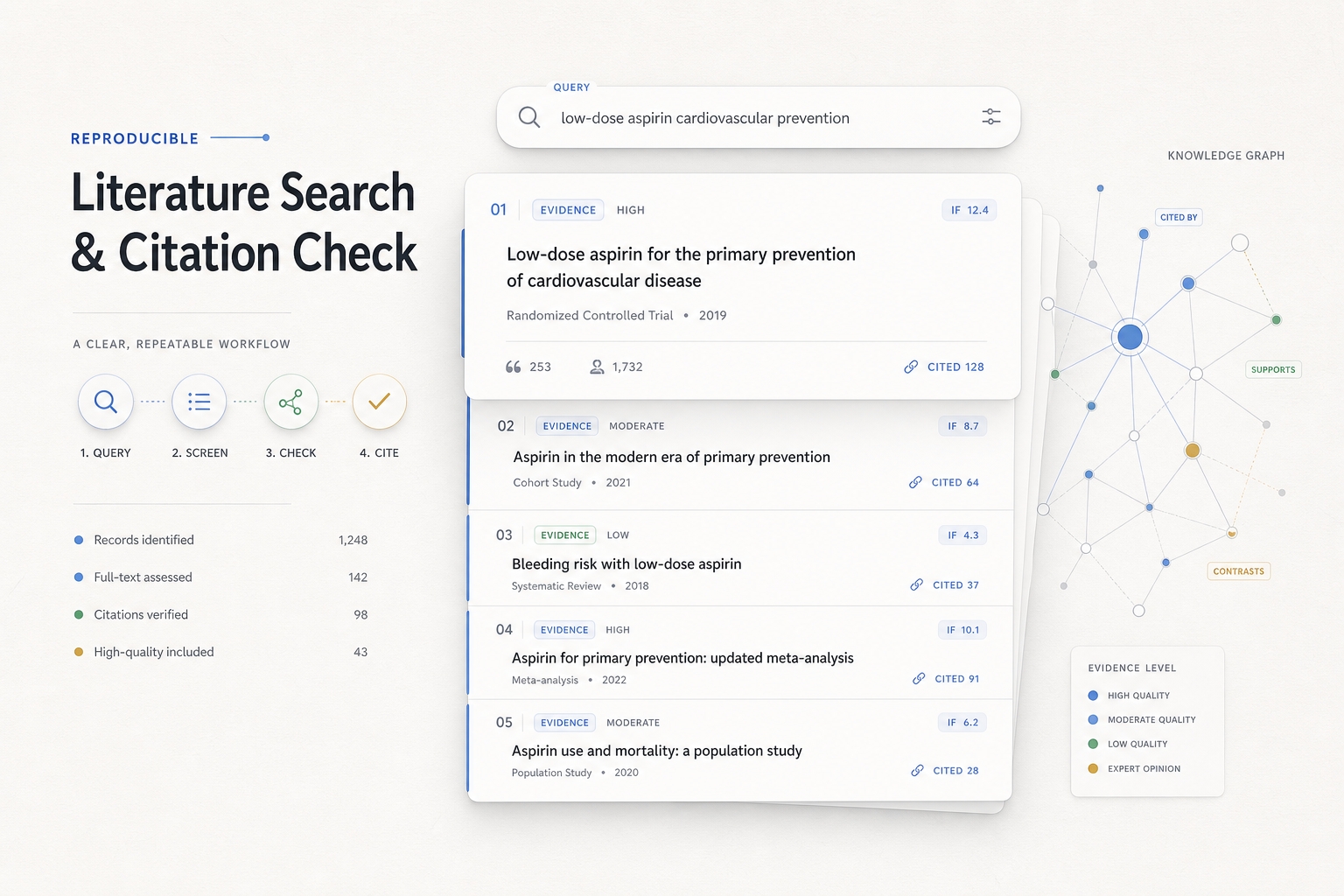

In-depth and reliable research on Bio/Med

Applicable scenarios: Complete a round of biological/biomedical literature retrieval and give the user detailed, reliable output.

Core objectives:

1. After a user initiates a search, break the question down into structured elements and keywords.

2. Retrieve literature from publicly available, reproducible data sources.

3. Deduplicate, stratify, classify, and sort the search results.

4. Use clickable in-text citations in the response.

5. List complete bibliographic information at the end of the document, and report each journal's IF (impact factor) only from publicly available sources; if high-confidence verification is not possible, the IF must be marked as "Pending Verification" or "Not Detected".

Featured by

YouMind

Why we love this skill

This skill provides a rigorous biomedical literature review process, from multi-level searches to impact factor (IF) verification, ensuring highly reliable and reproducible results, making it a valuable tool for researchers.

Instructions

The core of this process is as follows. First, run multiple queries through PubMed/NCBI E-utilities for a reproducible initial screening, then deduplicate by PMID and grade the evidence from the abstracts. Only a small number of key papers proceed to PMC/BioC full-text review. In-text citations must be clickable, and complete bibliographic information must be listed at the end of the response. The IF may only be checked conservatively against publicly accessible sources: do not assume access to JCR or a local database, do not guess, and do not treat low-confidence matches as definitive results.

1. Preprocessing after a user initiates a search

1.1 First, analyze the user's question.

After receiving a user's question, first break the natural-language question down into structured elements.

The following must be identified:

• Research subjects: genes, proteins, drugs, channels, cell types, tissues, diseases, and models.

• Biological systems: humans, mice, rats, zebrafish, organoids, retina, brain regions, cell lines, etc.

• Relationship types: expression, regulation, function, mechanism, phenotype, death, survival, treatment, toxicity, development, degeneration, etc.

• Evidence requirements: whether direct evidence, mechanistic evidence, full-text evidence, figures and tables, dosage parameters, or experimental methods are required.

• Time range: no time limit, the last 5 years, the last year, the latest developments, or classic literature.

• Output types: short answer, representative literature, review summary, experimental design suggestions, evidence table, mechanism diagram.

Example:

User question:

"Please help me find literature related to retinal organoid death."

Structured decomposition (example):

• Core models: retinal organoid, retina organoid, hPSC-derived retinal organoid, optic cup organoid.

• Phenotype: cell death, apoptosis, degeneration, survival loss, stress, necrosis.

• Related cells: photoreceptor, cone, rod, retinal ganglion cell, Müller glia.

• Potential mechanisms: oxidative stress, ER stress, mitochondrial dysfunction, hypoxia, inflammation, ferroptosis, necroptosis.

• Evidence objectives: Prioritize original studies that directly observe cell death/apoptosis/degeneration in human or animal retinal organoids; then look for literature on indirect mechanisms.
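
The decomposition above can be held in a small data structure so that later steps know which elements the user left unspecified. The following is a minimal sketch; the class name `StructuredQuestion`, its field names, and the defaults are illustrative assumptions, not part of the skill itself.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class StructuredQuestion:
    """Hypothetical container for the elements identified in step 1.1."""
    subjects: List[str] = field(default_factory=list)   # genes, drugs, models, ...
    systems: List[str] = field(default_factory=list)    # species, tissues, cell lines
    relations: List[str] = field(default_factory=list)  # expression, death, toxicity, ...
    evidence: List[str] = field(default_factory=list)   # direct, mechanistic, full text
    time_range: str = "unlimited"                       # assumed default
    output_type: str = "representative literature"      # assumed default

    def missing_elements(self) -> List[str]:
        """Return the names of list-valued elements that are still empty."""
        names = ["subjects", "systems", "relations", "evidence"]
        return [n for n in names if not getattr(self, n)]


question = StructuredQuestion(
    subjects=["retinal organoid", "retina organoid", "optic cup organoid"],
    relations=["cell death", "apoptosis", "degeneration"],
    evidence=["direct observation in retinal organoids"],
)
# The user did not name a species or system, so that element is reported as missing.
print(question.missing_elements())
```

An empty element does not block the search; it only signals that the corresponding term group (for example species) should be left out of the queries.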

1.2 Keyword Segmentation Principles (Example)

Don't just write one search query. Prepare at least three categories of terms for each concept.

Category 1: Exact terms.

• retinal organoid

• retina organoid

• human retinal organoid

• hPSC-derived retinal organoid

• iPSC-derived retinal organoid

Category 2: Synonyms and hypernyms.

• optic cup organoid

• 3D retinal culture

• stem cell-derived retina

• retinal differentiation

• retinal tissue model

Category 3: Mechanism and phenotype terms.

• apoptosis

• cell death

• degeneration

• survival

• stress

• oxidative stress

• ER stress

• mitochondrial dysfunction

• hypoxia

• necroptosis

• ferroptosis

If the user specifies the cell type, add:

• photoreceptor

• cone

• rod

• retinal ganglion cell

• Müller glia

• bipolar cell

• amacrine cell

If the user specifies the species or origin, add:

• human

• mouse

• rat

• zebrafish

• hESC

• iPSC

• pluripotent stem cell

1.3 Generating hierarchical search queries

Generate 3–6 queries (never fewer than 3). Each query corresponds to one search objective.

First layer: Direct evidence retrieval.

Used to find literature that directly matches the target model and target phenotype.

```text

("retinal organoid" OR "retina organoid" OR "human retinal organoid") AND (apoptosis OR "cell death" OR degeneration)

```

Second layer: Extended model retrieval.

Used to capture literature where the author did not use the precise term "retinal organoid" but it is actually relevant.

```text

("optic cup organoid" OR "3D retinal culture" OR "stem cell-derived retina") AND (survival OR apoptosis OR stress)

```

Third layer: Mechanism-specific search.

Used to verify specific pathways or mechanisms.

```text

("retinal organoid" OR "retina organoid") AND ("oxidative stress" OR "ER stress" OR hypoxia OR mitochondria)

```

Fourth layer: Cell type specific search.

```text

("retinal organoid" OR "retina organoid") AND (photoreceptor OR cone OR rod OR "retinal ganglion cell") AND (death OR apoptosis OR degeneration)

```

Fifth layer: Disease model retrieval.

```text

("retinal organoid" OR "retina organoid") AND (disease OR degeneration OR dystrophy OR retinitis OR glaucoma)

```

Sixth layer: Review/background search.

```text

("retinal organoid" OR "retina organoid") AND (review OR protocol OR model)

```
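
The layered queries above all follow the same pattern: one OR-group per concept, joined with AND. A minimal sketch of a builder for that pattern is shown below; the helper names `quote_term`, `or_group`, and `build_query` are hypothetical, and only three of the six layers are generated to keep the example short.

```python
from typing import Dict, Iterable, List


def quote_term(term: str) -> str:
    """Quote multi-word terms so they are searched as phrases."""
    return f'"{term}"' if " " in term else term


def or_group(terms: Iterable[str]) -> str:
    """Join one concept's terms with OR and wrap them in parentheses."""
    return "(" + " OR ".join(quote_term(t) for t in terms) + ")"


def build_query(*groups: List[str]) -> str:
    """AND together one OR-group per concept, skipping empty groups."""
    return " AND ".join(or_group(g) for g in groups if g)


MODEL = ["retinal organoid", "retina organoid"]
LAYERS: Dict[str, str] = {
    "direct": build_query(MODEL + ["human retinal organoid"],
                          ["apoptosis", "cell death", "degeneration"]),
    "mechanism": build_query(MODEL,
                             ["oxidative stress", "ER stress", "hypoxia", "mitochondria"]),
    "review": build_query(MODEL, ["review", "protocol", "model"]),
}

for name, query in LAYERS.items():
    print(f"{name}: {query}")
```

Keeping each concept in its own list makes it easy to add a cell-type or species group only when the user specified one.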

2. Search methods and data sources

2.1 Preferred: PubMed / NCBI E-utilities

PubMed is the preferred tool for searching biomedical literature. Do not default to scraping PubMed pages. Use the NCBI E-utilities API instead.

2.1.1 ESearch: Retrieving PMIDs with a Query

Endpoint:

```text

https://eutils.ncbi.nlm.nih.gov/entrez/eutils/esearch.fcgi

```

Parameters:

```text

db=pubmed

term=

retmode=json

retmax=20

sort=relevance

```

To sort by publication date instead:

```text

sort=pub+date

```

Example:

```text

https://eutils.ncbi.nlm.nih.gov/entrez/eutils/esearch.fcgi?db=pubmed&term=(%22retinal%20organoid%22%20OR%20%22retina%20organoid%22)%20AND%20(apoptosis%20OR%20%22cell%20death%22)&retmode=json&retmax=20&sort=relevance

```

Read the following field from the response:

```text

esearchresult.idlist

```

This is the PMID list.
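
The ESearch call above can be sketched with the Python standard library. The function names (`build_esearch_url`, `parse_pmids`, `fetch_pmids`) are hypothetical, and the network call is kept in a separate function so that URL building and response parsing can be checked offline.

```python
import json
from typing import List
from urllib.parse import urlencode
from urllib.request import urlopen

ESEARCH_URL = "https://eutils.ncbi.nlm.nih.gov/entrez/eutils/esearch.fcgi"


def build_esearch_url(term: str, retmax: int = 20, sort: str = "relevance") -> str:
    """Build an ESearch URL; urlencode percent-encodes quotes, spaces and parentheses."""
    params = {"db": "pubmed", "term": term, "retmode": "json",
              "retmax": retmax, "sort": sort}
    return f"{ESEARCH_URL}?{urlencode(params)}"


def parse_pmids(payload: str) -> List[str]:
    """Read esearchresult.idlist from a JSON response body."""
    data = json.loads(payload)
    return data.get("esearchresult", {}).get("idlist", [])


def fetch_pmids(term: str, retmax: int = 20) -> List[str]:
    """Perform the request (needs network access; not called in this sketch)."""
    with urlopen(build_esearch_url(term, retmax), timeout=30) as response:
        return parse_pmids(response.read().decode("utf-8"))


url = build_esearch_url('("retinal organoid" OR "retina organoid") AND apoptosis')
print(url)
```

Logging the exact URL for every query is a cheap way to keep the search reproducible.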

2.1.2 ESummary: Obtaining Literature Metadata

Endpoint:

```text

https://eutils.ncbi.nlm.nih.gov/entrez/eutils/esummary.fcgi

```

Parameters:

```text

db=pubmed

id=PMID1,PMID2,PMID3

retmode=json

```

Extracted fields:

• PMID

• title

• fulljournalname

• source/journal abbreviation

• pubdate

• authors

• DOI and PMCID in articleids

• volume, issue, pages
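
A minimal sketch of pulling these fields out of an ESummary JSON response follows. It assumes the response keeps its records under `result`, keyed by PMID and listed in `result.uids`, with identifiers in `articleids` entries of the form `{"idtype": ..., "value": ...}`; verify that layout against a live response before relying on it. The function name `extract_summaries` is hypothetical.

```python
from typing import Any, Dict, List


def extract_summaries(payload: Dict[str, Any]) -> List[Dict[str, Any]]:
    """Flatten an ESummary JSON payload into one dict per PMID."""
    result = payload.get("result", {})
    records = []
    for uid in result.get("uids", []):
        doc = result.get(uid, {})
        # Map identifier type (e.g. "doi", "pmc") to its value.
        ids = {a.get("idtype"): a.get("value") for a in doc.get("articleids", [])}
        records.append({
            "pmid": uid,
            "title": doc.get("title"),
            "journal": doc.get("fulljournalname"),
            "journal_abbrev": doc.get("source"),
            "pubdate": doc.get("pubdate"),
            "authors": [a.get("name") for a in doc.get("authors", [])],
            "doi": ids.get("doi"),
            "pmcid": ids.get("pmc"),
            "volume": doc.get("volume"),
            "issue": doc.get("issue"),
            "pages": doc.get("pages"),
        })
    return records
```

Missing fields come back as `None` rather than raising, so a record without a DOI or PMCID can still be listed and flagged later.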

2.1.3 EFetch: Retrieving Abstracts and XML Details

Endpoint:

```text

https://eutils.ncbi.nlm.nih.gov/entrez/eutils/efetch.fcgi

```

Parameters:

```text

db=pubmed

id=PMID1,PMID2

retmode=xml

rettype=abstract

```

Extracted fields:

• ArticleTitle

• AbstractText

• Journal Title

• ISOAbbreviation

• ISSN / eISSN

• PubDate

• DOI

• PMCID

• MeSH terms
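
The fields listed above can be read from the EFetch XML with `xml.etree.ElementTree`. This is a sketch under the assumption that the response is a standard `PubmedArticleSet`; the element paths should be checked against a real response, and the function name `parse_pubmed_xml` is hypothetical.

```python
import xml.etree.ElementTree as ET
from typing import Any, Dict, List, Optional


def _text(node: Optional[ET.Element]) -> str:
    """Flatten an element's text, including inline markup such as <i> or <sup>."""
    return "".join(node.itertext()).strip() if node is not None else ""


def parse_pubmed_xml(xml_text: str) -> List[Dict[str, Any]]:
    """Extract title, abstract, journal, identifiers and MeSH terms per article."""
    base = "./MedlineCitation/Article"
    records = []
    for art in ET.fromstring(xml_text).iter("PubmedArticle"):
        # Use the article-level ID list only, not the IDs of cited references.
        ids = {n.get("IdType"): _text(n)
               for n in art.findall("./PubmedData/ArticleIdList/ArticleId")}
        records.append({
            "pmid": _text(art.find("./MedlineCitation/PMID")),
            "title": _text(art.find(f"{base}/ArticleTitle")),
            "abstract": " ".join(
                _text(n) for n in art.findall(f"{base}/Abstract/AbstractText")),
            "journal": _text(art.find(f"{base}/Journal/Title")),
            "iso_abbrev": _text(art.find(f"{base}/Journal/ISOAbbreviation")),
            "issn": {n.get("IssnType"): _text(n)
                     for n in art.findall(f"{base}/Journal/ISSN")},
            "pubdate": " ".join(
                _text(n) for n in art.findall(f"{base}/Journal/JournalIssue/PubDate/*")),
            "doi": ids.get("doi"),
            "pmcid": ids.get("pmc"),
            "mesh": [_text(n) for n in art.findall(
                "./MedlineCitation/MeshHeadingList/MeshHeading/DescriptorName")],
        })
    return records
```

The ISSN values are kept together with their type because the later IF lookup is more reliable when matched on ISSN than on journal name alone.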

2.1.4 Batch Retrieval Strategy

Recommended process:

1. Call ESearch for each query.

2. For each query, select the first 5–20 results.

3. Merge all PMIDs.

4. Use PMID to remove duplicates.

5. Use ESummary / EFetch to retrieve metadata and abstract data in batches.

6. In the initial screening stage, only read the metadata and abstract; do not start by reading the full text.
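
Steps 2 to 5 of this process can be sketched as follows. The function names and the batch size of 200 are assumptions made for illustration; the sketch also records which queries returned each PMID, which can later help with ranking.

```python
from typing import Dict, Iterator, List


def merge_pmids(results_by_query: Dict[str, List[str]],
                per_query: int = 20) -> Dict[str, List[str]]:
    """Merge per-query PMID lists, deduplicating by PMID in first-seen order.

    Returns a mapping from PMID to the list of queries that returned it.
    """
    merged: Dict[str, List[str]] = {}
    for query, pmids in results_by_query.items():
        for pmid in pmids[:per_query]:          # keep only the top results per query
            merged.setdefault(pmid, []).append(query)
    return merged


def batched(ids: List[str], size: int = 200) -> Iterator[List[str]]:
    """Yield ID chunks for batched ESummary/EFetch calls (size is an assumption)."""
    for start in range(0, len(ids), size):
        yield ids[start:start + size]


hits = {"direct": ["1", "2", "3"], "mechanism": ["2", "4"]}
merged = merge_pmids(hits)
for chunk in batched(list(merged), size=3):
    print(",".join(chunk))  # value for the id= parameter
```

A PMID returned by several layers (here PMID 2) is a reasonable candidate for a higher position in the sorted results.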

────────────────

2.2 Second Phase: Full-Text Review via PMC/BioC

Proceed to full-text review only in the following cases:

• The user asks for a careful reading of the full text.

• The abstract is insufficient to determine the mechanism.

• The question requires figures or tables, experimental methods, concentrations, dosages, IC50, EC50, Kd, or Ki.

• A small number of key PMIDs have been identified and need to be reviewed one by one.

2.2.1 PMID to PMCID

Endpoint:

```text

https://www.ncbi.nlm.nih.gov/pmc/utils/idconv/v1.0/?ids=

```

If PMCID is returned, it means that PMC may have open full text.
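
A minimal sketch of this conversion step is shown below. It assumes the converter accepts a comma-separated `ids` parameter together with `format=json`, and that the JSON response carries a `records` list whose entries contain `pmid` and, when available, `pmcid`; check both against the current ID Converter documentation. The function names are hypothetical.

```python
from typing import Any, Dict, List, Optional
from urllib.parse import urlencode

IDCONV_URL = "https://www.ncbi.nlm.nih.gov/pmc/utils/idconv/v1.0/"


def build_idconv_url(pmids: List[str]) -> str:
    """Build the converter URL for a batch of PMIDs (format=json is assumed)."""
    return f"{IDCONV_URL}?{urlencode({'ids': ','.join(pmids), 'format': 'json'})}"


def map_pmid_to_pmcid(payload: Dict[str, Any]) -> Dict[str, Optional[str]]:
    """Map each PMID to its PMCID, or to None when no PMCID was returned."""
    mapping: Dict[str, Optional[str]] = {}
    for record in payload.get("records", []):
        pmid = str(record.get("pmid", ""))
        if pmid:
            mapping[pmid] = record.get("pmcid")
    return mapping
```

PMIDs that map to `None` stay at the abstract level; only those with a PMCID are candidates for the BioC or PMC XML step.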

2.2.2 Prioritize BioC JSON

Endpoint:

```text

https://www.ncbi.nlm.nih.gov/research/bionlp/RESTful/pmcoa.cgi/BioC_json/

```

Advantages: Highly structured, suitable for extracting main text paragraphs.

2.2.3 Try PMC XML when BioC is unavailable

Endpoint:

```text

https://www.ncbi.nlm.nih.gov/pmc/articles/

```

When extracting the main text, skip:

• references

• bibliography

• acknowledgments

• author contributions

• competing interests

Full-text review must include stopping criteria:

• Each document is scanned only once.

• By default, only paragraphs related to the question are extracted.

• If the target field is not matched, mark it as "No direct evidence found".

• Avoid re-fetching or re-scanning the same document for additional keywords.
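
The skip list and the stopping criteria can be combined into a single pass over the passages of one document. The sketch below works on a simplified list of passage dicts, each with `infons.section_type` and `text`; the section labels in `SKIP_SECTIONS`, the `max_hits` cap, and the function name are assumptions, so compare them with the actual BioC JSON before use.

```python
from typing import Any, Dict, List

# Assumed section labels for references, acknowledgments, author
# contributions and competing interests; adjust to the real BioC output.
SKIP_SECTIONS = {"REF", "ACK_FUND", "AUTH_CONT", "COMP_INT"}
NO_EVIDENCE = "No direct evidence found"


def scan_passages(passages: List[Dict[str, Any]], keywords: List[str],
                  max_hits: int = 10) -> Dict[str, Any]:
    """Scan a document once and keep only passages that mention a keyword."""
    wanted = [k.lower() for k in keywords]
    hits = []
    for passage in passages:                      # single pass, no rescans
        section = str(passage.get("infons", {}).get("section_type", "")).upper()
        if section in SKIP_SECTIONS:
            continue
        text = passage.get("text", "")
        if any(k in text.lower() for k in wanted):
            hits.append({"section": section, "text": text})
            if len(hits) >= max_hits:             # hypothetical cap on extracted text
                break
    return {"status": "evidence found" if hits else NO_EVIDENCE, "passages": hits}
```

Passing all target keywords in one call keeps the "scan once" rule: the document is never walked a second time for a term that was forgotten.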

────────────────

2.3 bioRxiv / medRxiv

Used to supplement the latest preprints.

You can use the official API:

```text

https://api.biorxiv.org/details/biorxiv/YYYY-MM-DD/YYYY-MM-DD

https://api.biorxiv.org/details/medrxiv/YYYY-MM-DD/YYYY-MM-DD

```

You can also use a regular web search as a supplement:

```text

site:biorxiv.org retinal organoid apoptosis

site:medrxiv.org retina organoid degeneration

```

Preprints must be labeled:

```text

This is a preprint and has not been peer-reviewed.

```
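
Because the date-range endpoint shown above returns everything posted in the interval, keyword filtering has to happen on the client side. The sketch below builds the URL, filters a list of returned entries, and attaches the required label. It assumes each entry carries `title`, `abstract`, and `doi` fields; the function names are hypothetical.

```python
from datetime import date
from typing import Any, Dict, List

PREPRINT_NOTE = "This is a preprint and has not been peer-reviewed."


def build_details_url(server: str, start: date, end: date) -> str:
    """Build the date-range URL for bioRxiv or medRxiv."""
    if server not in {"biorxiv", "medrxiv"}:
        raise ValueError(f"unknown server: {server}")
    return f"https://api.biorxiv.org/details/{server}/{start.isoformat()}/{end.isoformat()}"


def filter_preprints(entries: List[Dict[str, Any]],
                     keywords: List[str]) -> List[Dict[str, Any]]:
    """Keep entries whose title or abstract mentions every keyword, and label them."""
    wanted = [k.lower() for k in keywords]
    kept = []
    for entry in entries:
        haystack = f"{entry.get('title', '')} {entry.get('abstract', '')}".lower()
        if all(k in haystack for k in wanted):
            kept.append({**entry, "note": PREPRINT_NOTE})
    return kept


print(build_details_url("biorxiv", date(2024, 1, 1), date(2024, 1, 31)))
```

Attaching the note at filtering time means the label cannot be lost when preprints are later merged with PubMed records.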

────────────────

2.4 Crossref/OpenAlex/Unpaywall

Used to fill in the DOI, the open-access full-text URL, and publication details.

Crossref:

```text

https://api.crossref.org/works?query.title=